I’m not ashamed to admit I’m on the older side, at least compared with the rest of the staff at HawkWatch International. With nearly 20 years at the organization, I’ve seen a lot of changes. These days, it feels like the term “AI” (artificial intelligence) is everywhere: it’s used for web searches, writing, creating and recognizing images and videos, assisting with work tasks, and more. Perhaps because of my age, I’ve been largely reluctant to use AI in most mainstream ways, and I have many misgivings about it. It can be very energy-intensive. It can hamper critical thinking and creativity. Personally, I’m very concerned about how it is being used to create fake images and videos of “wildlife encounters” that skew people’s perceptions of the natural world. With AI so pervasive, it’s hard not to feel like we’re going over a cliff when it comes to what we can and cannot believe.

So it’s strange to find myself writing a blog about how we do use AI in our eagle research. But here we are. How we came to use AI is actually quite simple: once we started using motion-sensitive cameras in our eagle-vehicle strike, nest monitoring, and eagle winter ecology programs, it wasn’t long before we fell woefully behind on reviewing the millions of images we could acquire in a short time. As of this writing, we have over 13.5 million total images from “camera traps” and drones. While you’ve been reading this, there’s a very good chance our cameras have acquired even more! The images we gather help us understand the issues and threats facing eagles, like how likely they are to flush from roadkill at different distances from the road, and that understanding can then be translated into conservation action (Slater et al. 2022). If we can’t review the images we acquire efficiently, we risk missing real-time conservation opportunities. The logical next step for our research programs was to begin using AI photo classification models, which have come into their own in the past few years.

Wildlife biologists aren’t always on the cutting edge of technology, so we are very fortunate that a former board member, Jeremy Hanks, is a leader in the tech industry. He connected us with the expertise we needed and provided funding via the Jeremy & Amy Hanks Foundation to pilot this effort. I won’t go into all the modeling details, as I’d need to phone that expert to do so. In a nutshell, we provide the AI with images humans have labeled (e.g., “Golden Eagle”) to train a classification model, and the model is run on a larger test dataset to make predictions. Then we review the prediction successes and failures to identify what additional training images are needed, and repeat the process through many iterations.
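For readers curious what that train, predict, and review cycle looks like in practice, here is a minimal sketch in Python. It is a hypothetical illustration, not the model we use: it swaps in a simple 1-nearest-neighbour classifier working on synthetic two-number “features” instead of real photos, and the labels and the names `make_images`, `predict`, and `refine` are made up for the example. Each round, the model predicts labels for a human-labeled test set, and the images it gets wrong are added to the training set before the next round, similar in spirit to a batch version of Hart’s condensed nearest-neighbour rule.

```python
import math
import random

# Hypothetical labels for illustration only
EAGLE, OTHER = "Golden Eagle", "other"


def make_images(rng, n):
    """Synthetic stand-in for human-labeled images: 2-D 'feature' vectors.

    A real pipeline would use features extracted from photos; here each class
    is a Gaussian cloud so the example runs with the standard library alone.
    """
    images = []
    for i in range(n):
        label = EAGLE if i % 2 == 0 else OTHER
        cx, cy = (2.0, 2.0) if label == EAGLE else (0.0, 0.0)
        images.append(((rng.gauss(cx, 1.0), rng.gauss(cy, 1.0)), label))
    return images


def predict(training, features):
    """1-nearest-neighbour: copy the label of the closest training image."""
    _, label = min(training, key=lambda item: math.dist(item[0], features))
    return label


def refine(training, test_set, max_rounds=10):
    """Predict on the labeled test set, add the failures to training, repeat."""
    history = []
    for _ in range(max_rounds):
        failures = [(x, y) for x, y in test_set if predict(training, x) != y]
        history.append(1 - len(failures) / len(test_set))  # accuracy this round
        if not failures:
            break
        training = training + failures  # the "additional training images"
    return training, history


rng = random.Random(42)
seed = [((2.0, 2.0), EAGLE), ((0.0, 0.0), OTHER)]  # tiny hand-labeled starter set
test_set = make_images(rng, 200)
training, history = refine(seed, test_set)
print(f"training images: {len(training)}, accuracy by round: {history}")
```

A real workflow would also keep a separate held-out set of labeled images that never enters training, so that the reported accuracy reflects images the model has not already seen.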

Although image recognition models are fairly common in the wildlife science world, we are using AI in two innovative ways. First, our “live eagle” model not only recognizes eagles and other raptors but also looks for leg bands to help us identify individuals we’ve previously “marked.” This is really important to our research on winter survival, for example, because it allows us to track the weights of individual birds over the winter months, from year to year, and more. Our model is still being refined and applied to new datasets (for example, we recently ran it on 1.6 million images acquired at water features in Utah’s West Desert to look for eagles and bands), but it is already very good at recognizing Golden Eagles and bands in our own datasets.

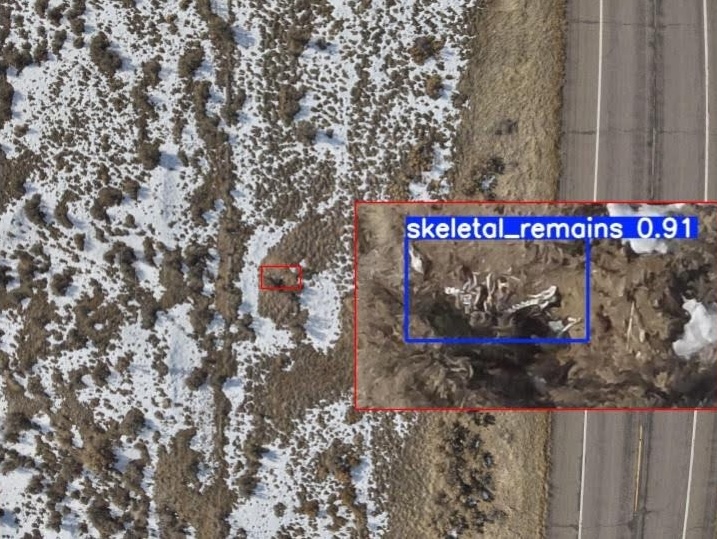

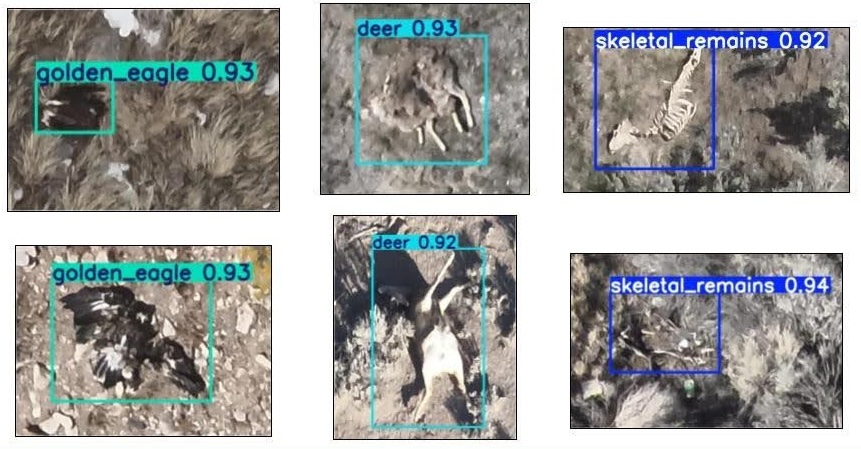

The second way our AI image recognition is innovative is that we are training a model to recognize roadkill as part of our efforts to reduce eagle-vehicle collisions. The training process is similar to what I’ve already described, except that the roadkill images are acquired by flying drones along highway rights-of-way, and finding actual roadkill in those images is a bit like looking for a needle in a haystack. It took us quite a while to acquire enough training images through human review of tens of thousands of drone images, but we are very happy with the model’s initial performance. The model recognizes dead deer, skeletal remains, and dead Golden Eagles quite well, which will help us survey more rapidly and prioritize our roadkill relocation efforts to reduce eagle collisions.

While all of this is very exciting, you might be wondering about the potential negative impacts of using AI in this way. As mentioned at the beginning of this blog, I have many concerns about AI myself, and here I speak only about our experience using AI in the ways described.

First, and perhaps most importantly, our AI models are trained on and applied to hand-picked datasets, so they are not as energy-intensive as models that scour large swaths of the internet. For example, one training run of our model uses about as much energy as one load in a washing machine or, if you prefer, eight hours of desktop computer use. Once training is complete and the model is ready for use, it can review roughly one million images using the same amount of energy. By contrast, while training our roadkill AI model, we spent 970 human labor hours reviewing a mere 17,655 images, partly because each image was reviewed by at least two people for quality control. Even from this one example, you get the picture: AI is vastly more energy-efficient than having people review all 13.5 million images we currently have on hand.
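To put those figures side by side, here is a rough back-of-envelope calculation in Python. Every input comes from the paragraph above except the 2,080-hour full-time work year (40 hours × 52 weeks), which is an assumption added for illustration. It also assumes that the pace of our roadkill review, which included double-checking by a second person, applies to every image in the archive, so treat the result as an order-of-magnitude comparison rather than a precise estimate.

```python
# Figures quoted in this post
ARCHIVE_IMAGES = 13_500_000       # camera-trap and drone images on hand
ROADKILL_IMAGES = 17_655          # roadkill images reviewed by people
ROADKILL_HOURS = 970              # human labor hours spent on that review
IMAGES_PER_MODEL_RUN = 1_000_000  # images reviewed per washing-machine load of energy
DESKTOP_HOURS_PER_RUN = 8         # one washing-machine load ~ 8 hours of desktop use

# Assumption for illustration only (not from the post)
FULL_TIME_HOURS_PER_YEAR = 40 * 52

# Human review, extrapolated from the roadkill example
human_images_per_hour = ROADKILL_IMAGES / ROADKILL_HOURS
human_hours = ARCHIVE_IMAGES / human_images_per_hour
person_years = human_hours / FULL_TIME_HOURS_PER_YEAR

# Model review, using the post's energy equivalents
model_runs = ARCHIVE_IMAGES / IMAGES_PER_MODEL_RUN
model_desktop_hours = model_runs * DESKTOP_HOURS_PER_RUN

print(f"People: {human_images_per_hour:.1f} images/hour -> {human_hours:,.0f} hours "
      f"(~{person_years:.0f} full-time years)")
print(f"Model: ~{model_runs:.1f} washing-machine loads of energy "
      f"(~{model_desktop_hours:.0f} desktop-hours)")
```

With these inputs, people would need roughly 741,700 hours (around 357 full-time years) to review the whole archive, while the model would use about as much energy as 13.5 washing-machine loads, or about 108 hours of desktop computer use. If reviewers work at a computer (again an assumption), those human hours are also desktop-hours of energy use, which is where the gap in energy efficiency comes from.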

Another common criticism we’ve heard about using AI the way we are is that it takes jobs away from entry-level biologists. As noted above, our experience is that humans are still very necessary to label training images and to review and correct the model outputs. Additionally, most of the people involved in reviewing the roadkill imagery found it quite tedious and unrewarding. Given the volume of imagery we are acquiring, it simply isn’t humanly possible to keep reviewing every image by eye, but people remain critical for the always-important jobs of model training and quality control.

It is a new world out there, and as always, we are doing our best to adapt and change as thoughtfully as possible. Thanks for reading… and hopefully you didn’t resort to an AI summary!

This blog was written by Dr. Steve Slater, HWI’s Conservation Science Director. You can learn more about Steve here.